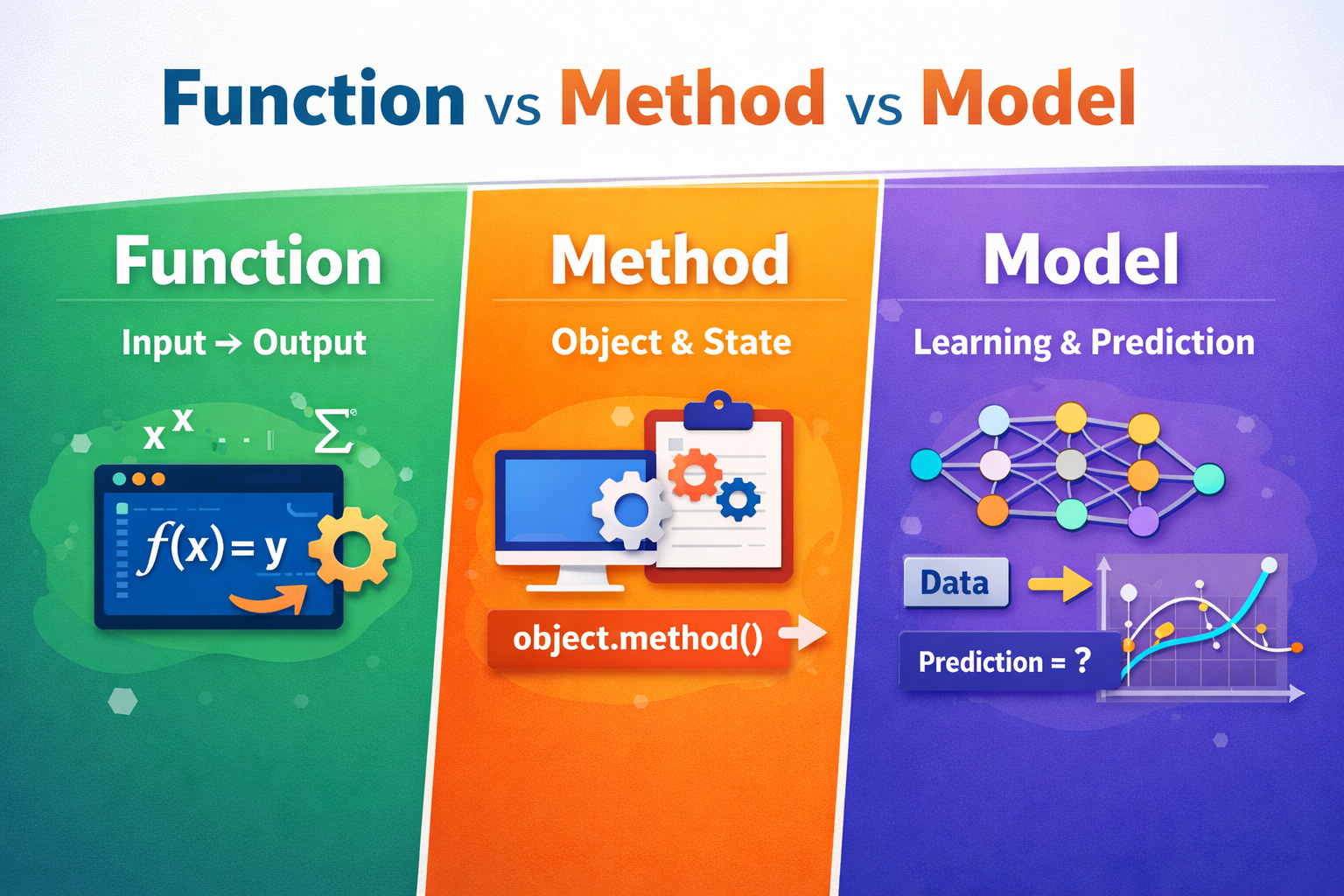

When people learn programming and machine learning together, three words start appearing everywhere: function, method, and model. They sound similar because all three take some input and produce some output. But they live in different “worlds” of thinking:

- A function is the basic building block of programming (especially in procedural / POP style).

- A method is a function that belongs to an object (the core idea of OOP).

- A model in machine learning is a trained system that maps inputs to outputs using learned parameters (the core idea of ML).

Understanding the differences will make your code cleaner and ML concepts easier to reason about.

Functions in Procedural Programming (POP)

In procedural programming, the program is written as a sequence of steps, and functions are used to organize those steps. A function is a named block of code that can accept inputs (parameters) and return an output. The goal is reusability: instead of repeating the same logic in many places, you write it once and call it whenever needed.

Mathematically, a function is a mapping: for a given input, there is a defined output. In programming, this idea matches best when the function is pure, meaning it does not depend on hidden state (like global variables) and it does not create side effects (like printing, writing files, or using random numbers). Pure functions behave like mathematical functions: same input → same output.

Here is a simple POP-style function (Python example). It behaves like a mathematical function because it only uses its input:

    def percentage(obtained, total):
        return (obtained / total) * 100

    print(percentage(450, 500))  # 90.0

However, in real programs, many functions are not pure. A function may read the current time, call an API, use randomness, or modify a global variable. In those cases, the same input may not produce the same output, because the function is also using something outside the input.
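As a rough illustration of that difference, the sketch below contrasts a function that reads a global variable with one that receives everything as arguments. The names `TAX_RATE`, `price_with_tax`, and `price_with_tax_pure` are hypothetical and chosen only for this example.

```python
TAX_RATE = 0.18  # hypothetical global, used only for this illustration


def price_with_tax(amount):
    # Impure: the result depends on the global TAX_RATE, not just on `amount`.
    return amount * (1 + TAX_RATE)


def price_with_tax_pure(amount, rate):
    # Pure: everything the result depends on is passed in explicitly.
    return amount * (1 + rate)


first = price_with_tax(100)
TAX_RATE = 0.05  # hidden state changes somewhere else in the program
second = price_with_tax(100)

print(first == second)  # False: same argument, different result
print(price_with_tax_pure(100, 0.18) == price_with_tax_pure(100, 0.18))  # True
```

The impure version is not wrong, but its result can only be predicted by someone who also knows the value of the global at the time of the call.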

Methods in Object-Oriented Programming (OOP)

A method is also a function, but it is attached to a class and called through an object. OOP is built around the idea that data and behavior should stay together. The object stores data (state), and methods operate on that state.

A method is not “different mathematics.” It is the same idea of input → output, but the method has an extra hidden input: the object itself (often written as self in Python or this in Java/C++). That means a method’s output depends on two things:

- the explicit arguments you pass, and

- the current state of the object.

So, a method is deterministic like a mathematical function only when the object state is the same.

Example: a BankAccount object has a balance (state). The method withdraw() depends on the balance and the withdraw amount.

    class BankAccount:
        def __init__(self, balance):
            self.balance = balance  # state stored on the object

        def withdraw(self, amount):
            if amount > self.balance:
                return "Insufficient balance"
            self.balance -= amount  # the call changes the object's state
            return self.balance

    acc = BankAccount(1000)
    print(acc.withdraw(200))  # 800
    print(acc.withdraw(200))  # 600 (same input, different output because state changed)

Notice something important: we called withdraw(200) twice. The input looks the same. But the output changed because the object’s internal state changed after the first call. This does not “break” mathematics; it simply shows that a method is a function of (state, input), not just input.
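One way to see the (state, input) view is to write the withdrawal as a plain function that takes the balance explicitly. This is only a sketch: `withdraw_pure` and its `(new_balance, ok)` return shape are hypothetical and are not part of the BankAccount class.

```python
def withdraw_pure(balance, amount):
    """Return (new_balance, ok) without modifying anything outside the function."""
    if amount > balance:
        return balance, False  # state unchanged, withdrawal refused
    return balance - amount, True


balance = 1000
balance, ok = withdraw_pure(balance, 200)
print(balance, ok)  # 800 True
balance, ok = withdraw_pure(balance, 200)
print(balance, ok)  # 600 True

# With the state spelled out, identical inputs give identical outputs.
print(withdraw_pure(1000, 200) == withdraw_pure(1000, 200))  # True
```

The two calls that returned 800 and 600 were never given the same input: the first received a balance of 1000 and the second a balance of 800.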

Also, just like functions, methods can be pure or impure. A method that only reads state and returns a computed value (like area() in a circle class) is often deterministic when state is unchanged.
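A minimal sketch of such a read-only method, assuming a hypothetical `Circle` class with a single `radius` attribute:

```python
import math


class Circle:
    def __init__(self, radius):
        self.radius = radius

    def area(self):
        # Reads state but never changes it.
        return math.pi * self.radius ** 2


c = Circle(2)
print(c.area() == c.area())  # True: state is unchanged between calls

c.radius = 3  # the state changes, so the result changes too
print(round(c.area(), 2))  # 28.27
```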

Models in Machine Learning (ML)

In machine learning, a model is a system that produces outputs from inputs using learned parameters. You can think of it as a function with memory:

$\hat{y} = f(x; \theta)$

Here:

- $x$ is the input (features),

- $\theta$ are the learned parameters (weights),

- $\hat{y}$ is the predicted output.

A key difference from normal programming is that in ML, the rule f is not fully hand-written. Instead, the model learns parameters from data during training.

A model usually comes with two main actions:

- training (learning parameters): fit()

- inference/prediction (using parameters): predict()
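To make these two actions concrete without any library, here is a minimal sketch of a one-feature linear model. The class name `TinyLinearModel` and its attributes `w` and `b` are assumptions made for illustration, and closed-form least squares is just one of several ways the parameters could be learned.

```python
class TinyLinearModel:
    """Hypothetical one-feature model: y_hat = w * x + b, with theta = (w, b)."""

    def __init__(self):
        self.w = 0.0
        self.b = 0.0

    def fit(self, xs, ys):
        # Training: closed-form least squares for a single feature.
        n = len(xs)
        mean_x = sum(xs) / n
        mean_y = sum(ys) / n
        cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        var = sum((x - mean_x) ** 2 for x in xs)
        self.w = cov / var
        self.b = mean_y - self.w * mean_x
        return self

    def predict(self, x):
        # Inference: uses only the input and the learned parameters.
        return self.w * x + self.b


model = TinyLinearModel().fit([1, 2, 3, 4], [2, 4, 6, 8])
print(model.w, model.b)  # 2.0 0.0
print(model.predict(5))  # 10.0
```

Nothing in the code states that the output is twice the input; the values of `w` and `b` come entirely from the data passed to fit().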

Here is a simple ML example using scikit-learn:

    from sklearn.linear_model import LinearRegression

    X = [[1], [2], [3], [4]]  # inputs (one feature per sample)
    y = [2, 4, 6, 8]          # targets

    model = LinearRegression()
    model.fit(X, y)           # training: learn the parameters

    print(model.predict([[5]]))  # ~[10.]

Real-world examples

A POP-style function might compute GST:

    def gst(amount, rate=0.18):
        return amount * rate

    print(gst(1000))  # 180.0

An OOP method might compute GST as part of an Invoice object, using its stored amount:

    class Invoice:
        def __init__(self, amount):
            self.amount = amount

        def gst(self, rate=0.18):
            return self.amount * rate

    inv = Invoice(1000)
    print(inv.gst())  # 180.0

An ML model might predict whether an email is spam:

- input: email features

- output: spam probability or label

- learned from thousands of examples rather than hard-coded rules

Even if such a model is imperfect, it can still be deterministic once trained (same email → same probability), unless you retrain it or use stochastic prediction.
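The sketch below illustrates that point with a tiny logistic model. The feature names, `WEIGHTS`, and `BIAS` are made-up values standing in for parameters that a real model would learn from training data.

```python
import math
import random

# Hypothetical "learned" parameters; a real model would obtain these from training.
WEIGHTS = {"contains_free": 1.5, "many_links": 1.0, "known_sender": -2.0}
BIAS = -0.5


def spam_probability(features):
    # Logistic model: sigmoid of a weighted sum of the features.
    score = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-score))


email = {"contains_free": 1, "many_links": 1, "known_sender": 0}

p1 = spam_probability(email)
p2 = spam_probability(email)
print(p1 == p2)      # True: fixed parameters, same input, same output
print(round(p1, 3))  # 0.881

# Stochastic prediction: sampling a label from the probability can differ between calls.
label = "spam" if random.random() < p1 else "not spam"
print(label)
```

The probability is reproducible because the parameters are fixed; only the sampled label at the end can vary from run to run.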

From a mathematical viewpoint, all three—functions, methods, and models—can be seen as mappings from inputs to outputs. The big differences come from state and learning.

- A function (POP) is a reusable block of logic. It matches mathematics best when it is pure.

- A method (OOP) is a function tied to an object. Its output depends on both input and object state.

- A model (ML) is a learned mapping. With fixed parameters it is usually deterministic, but training, updates, or stochastic inference can make outputs change over time.